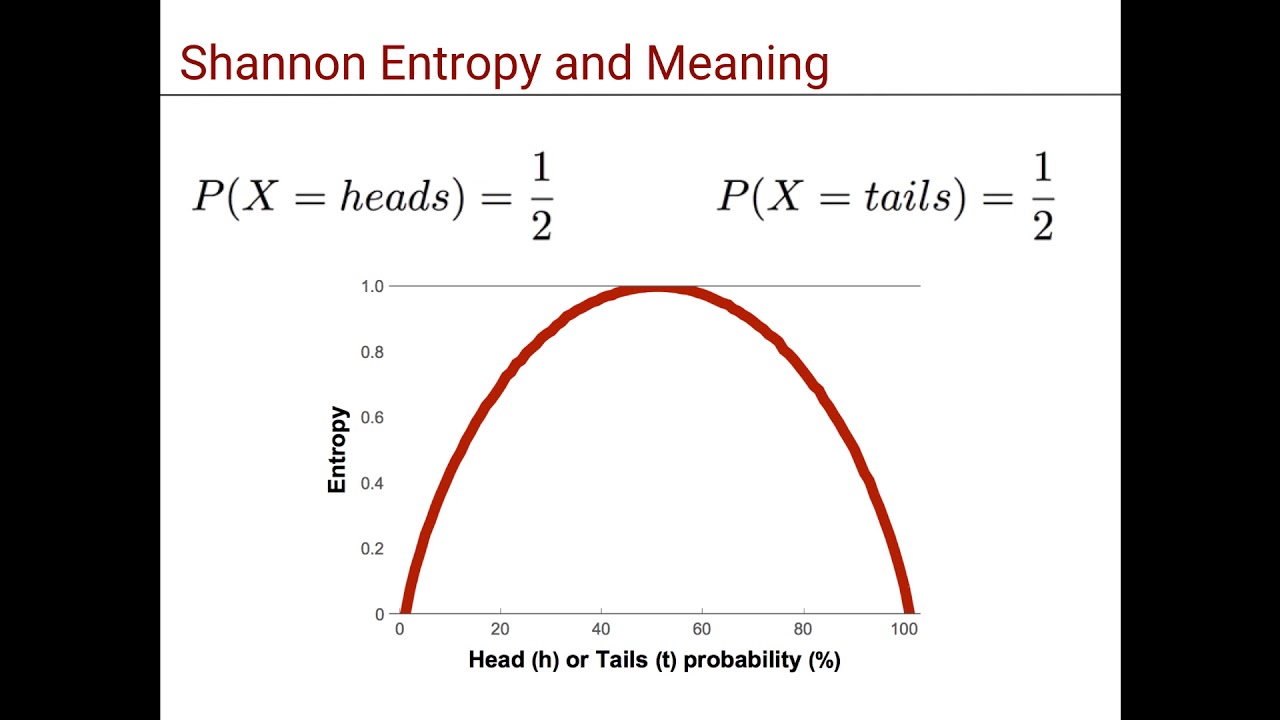

Shannon Entropy, Information Gain, and Picking Balls from Buckets

I’m a curriculum developer for the Machine Learning Nanodegree Program at Udacity. Given our promise to students that they’ll always be learning the most valuable and the most cutting-edge skills, it’s incumbent upon me to always be looking for ways to upgrade our content. Machine Learning is an exciting and ever-changing space, and our program must mirror that if we’re to fulfill our obligation to our students. After all, we can’t possibly teach the most modern and transformative technologies with static content that never changes! So, I spend a LOT of my time researching and studying new opportunities to bring the latest skills, trends, and technologies to our classrooms, and right now, I’m working on something really exciting for an upcoming launch.

Entropy and Information Gain are super important in many areas of machine learning, in particular, in the training of Decision Trees. My goal is to really understand the concept of entropy, and I always try to explain complicated concepts using fun games, so that’s what I do in this post.

In his 1948 paper “A Mathematical Theory of Communication”, Claude Shannon introduced the revolutionary notion of Information Entropy.

Figure: Number of rearrangements for the balls in each bucket.

This number of arrangements won’t be part of the formula for entropy, but it gives us an idea: if there are many arrangements, then entropy is large, and if there are very few arrangements, then entropy is low. In the next section, we’ll cook up a formula for entropy. The idea is to consider the probability of drawing the balls in a certain way from each bucket.

So, in order to cook up a formula, we’ll consider the following game. The spoiler is the following: the probability of winning this game will help us get the formula for entropy.

In this game, we’re given, again, the three buckets to choose from.

- We are shown the balls in the bucket, in some order.
- We then pick one ball out of the bucket at a time, record the color, and return the ball to the bucket.
- If the colors recorded make the same sequence as the sequence of balls that we were shown at the beginning, then we win 1,000,000 dollars.

This may sound complicated, but it’s actually very simple. Let’s say, for example, that we’ve picked Bucket 2, which has 3 red balls and 1 blue ball. We’re shown the balls in the bucket in some order, so let’s say they’re shown to us in that precise order: red, red, red, blue. Now, let’s try to draw the balls to get that sequence: red, red, red, blue. What’s the probability of this happening? Well…

- For the first ball to be red, the probability is 3/4, or 0.75.
- For the second ball to be red, the probability is again 3/4. (Remember that we put the first ball back in the bucket after looking at its color.)
- For the third ball to be red, the probability is again 3/4.
- For the fourth ball to be blue, the probability is now 1/4, or 0.25.

As these are independent events, the probability of all four of them happening is (3/4)*(3/4)*(3/4)*(1/4) = 27/256, or 0.105.

For exposition, the following three figures show the probabilities of winning with each of the buckets. For Bucket 1, the probability is 1; for Bucket 2, the probability is 0.105; and for Bucket 3, the probability is 0.0625.

OK, now we have a measure that gives us different values for the three buckets. The probability of winning this game gives us a high number for Bucket 1, a medium number for Bucket 2, and a low number for Bucket 3. In order to build the entropy formula, we want the opposite: some measure that gives us a low number for Bucket 1, a medium number for Bucket 2, and a high number for Bucket 3.
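To make the arithmetic of the bucket game concrete, here is a minimal Python sketch that multiplies the per-draw probabilities for a bucket, assuming draws with replacement as in the game. The function name `win_probability` and the `buckets` dictionary are made up for illustration. Only Bucket 2 (3 red, 1 blue) is spelled out in the text; treating Bucket 1 as 4 red balls and Bucket 3 as 2 red and 2 blue is an assumption, chosen because it matches the stated probabilities of 1 and 0.0625.

```python
from fractions import Fraction


def win_probability(shown_sequence):
    """Probability of redrawing `shown_sequence`, ball by ball, with replacement."""
    n = len(shown_sequence)
    prob = Fraction(1)
    for color in shown_sequence:
        # The ball goes back after each draw, so every draw is independent
        # and the chance of a color is its share of the bucket.
        prob *= Fraction(shown_sequence.count(color), n)
    return prob


# Bucket 2 is given in the text; Buckets 1 and 3 are assumed compositions
# that reproduce the probabilities 1 and 0.0625.
buckets = {
    "Bucket 1": ["red", "red", "red", "red"],
    "Bucket 2": ["red", "red", "red", "blue"],
    "Bucket 3": ["red", "red", "blue", "blue"],
}

for name, balls in buckets.items():
    p = win_probability(balls)
    print(f"{name}: {p} (about {float(p):.4f})")
```

Using `Fraction` keeps the result exact, so Bucket 2 comes out as 27/256 rather than a rounded decimal. The order in which the balls are shown does not change the result, since the product only depends on how many balls of each color the bucket holds.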
